diff --git a/.gitignore b/.gitignore

index 96760692..ce31fcc6 100644

--- a/.gitignore

+++ b/.gitignore

@@ -1,5 +1,7 @@

output/

dataset/

+test_report.md

+test_results/

root.log

report.log

*.sqlite*

diff --git a/README.rst b/README.rst

index 4b1b4ef3..56417b0c 100644

--- a/README.rst

+++ b/README.rst

@@ -85,3 +85,15 @@ joining the **PyAutoFit** `Slack channel `_, where

Slack is invitation-only, so if you'd like to join send an `email `_ requesting an

invite.

+

+Build Configuration

+-------------------

+

+The ``config/build/`` directory contains two files used by the automated build and test system

+(CI, smoke tests, and pre-release checks). These are not relevant to normal workspace usage.

+

+- ``config/build/no_run.yaml`` — scripts to skip during automated runs. Each entry is a filename stem

+ or path pattern with an inline comment explaining why it is skipped.

+- ``config/build/env_vars.yaml`` — environment variables applied to each script during automated runs.

+ Defines default values (e.g. test mode, small datasets) and per-script overrides for scripts

+ that need different settings.

diff --git a/config/build/env_vars.yaml b/config/build/env_vars.yaml

new file mode 100644

index 00000000..d956d4e5

--- /dev/null

+++ b/config/build/env_vars.yaml

@@ -0,0 +1,21 @@

+# Per-script environment variable configuration for automated runs

+# (smoke tests, pre-release checks, CI).

+#

+# "defaults" are applied to every script on top of the inherited environment.

+# "overrides" selectively unset or replace vars for matching path patterns.

+#

+# Pattern convention (same as no_run.yaml):

+# - Patterns containing '/' do a substring match against the file path

+# - Patterns without '/' match the file stem exactly

+

+defaults:

+ PYAUTOFIT_TEST_MODE: "2" # 0=normal, 1=reduced iterations, 2=skip sampler (fastest)

+ PYAUTO_WORKSPACE_SMALL_DATASETS: "1" # Cap grids/masks to 15x15, reduce MGE gaussians

+ PYAUTO_DISABLE_CRITICAL_CAUSTICS: "1" # Skip critical curve/caustic overlays in plots

+ PYAUTO_DISABLE_JAX: "1" # Force use_jax=False, avoid JIT compilation overhead

+ PYAUTO_FAST_PLOTS: "1" # Skip tight_layout() in subplots

+ JAX_ENABLE_X64: "True" # Enable 64-bit precision in JAX

+ NUMBA_CACHE_DIR: "/tmp/numba_cache" # Writable cache dir for numba

+ MPLCONFIGDIR: "/tmp/matplotlib" # Writable config dir for matplotlib

+

+overrides: []

diff --git a/config/build/no_run.yaml b/config/build/no_run.yaml

new file mode 100644

index 00000000..81649f7c

--- /dev/null

+++ b/config/build/no_run.yaml

@@ -0,0 +1,17 @@

+# Scripts to skip during automated runs (smoke tests, pre-release checks, CI).

+# Each entry is matched against script paths:

+# - Entries with '/' do a substring match against the file path

+# - Entries without '/' match the file stem exactly

+# Add an inline # comment to document the reason for skipping.

+

+- GetDist # Can't get it to install, even in optional requirements.

+- Zeus # Test Model Initialization no good.

+- ZeusPlotter # Test Model Initialization no good.

+- UltraNestPlotter # Test Model Initialization no good.

+- DynestyPlotter # Test Model Initialization no good.

+- start_point # bug https://github.com/rhayes777/PyAutoFit/issues/1017

+- tutorial_8_astronomy_example # Requires dataset/howtofit/chapter_1/astro/simple/data.npy (not auto-generated)

+- searches/mle/PySwarmsGlobal # PySwarms does not support JAX.

+- searches/mle/PySwarmsLocal # PySwarms does not support JAX.

+- searches/nest/UltraNest # UltraNest does not support JAX.

+- plot/PySwarmsPlotter # PySwarms does not support JAX.
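
The pattern convention documented in the comments of ``no_run.yaml`` and ``env_vars.yaml`` can be sketched in a few lines of Python. This is only an illustration of the two stated rules (entries containing '/' are substring matches against the path, entries without '/' must equal the file stem). The helper names ``matches`` and ``should_skip`` are hypothetical and are not taken from the build tooling, whose actual implementation may normalise paths differently.

```python
from pathlib import PurePosixPath


def matches(pattern: str, script_path: str) -> bool:
    """Return True if `pattern` selects `script_path` (hypothetical helper)."""
    path = PurePosixPath(script_path)
    if "/" in pattern:
        # Entries containing '/' are substring matches against the file path.
        return pattern in path.as_posix()
    # Entries without '/' must equal the file stem (name without extension).
    return path.stem == pattern


def should_skip(script_path: str, patterns: list[str]) -> bool:
    """Return True if any skip pattern selects the script (hypothetical helper)."""
    return any(matches(pattern, script_path) for pattern in patterns)


# Example entries copied from no_run.yaml; the script paths are made up.
skip_patterns = ["Zeus", "searches/mle/PySwarmsGlobal"]
print(should_skip("scripts/searches/mcmc/Zeus.py", skip_patterns))
print(should_skip("scripts/plot/ZeusPlotter.py", skip_patterns))
```

Under this reading, a bare entry such as ``Zeus`` does not also skip ``ZeusPlotter``, which is consistent with the file listing both entries separately.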

diff --git a/projects/cosmology/example_1_intro.py b/projects/cosmology/example_1_intro.py

index f72f1def..8f89a264 100644

--- a/projects/cosmology/example_1_intro.py

+++ b/projects/cosmology/example_1_intro.py

@@ -1,372 +1,368 @@

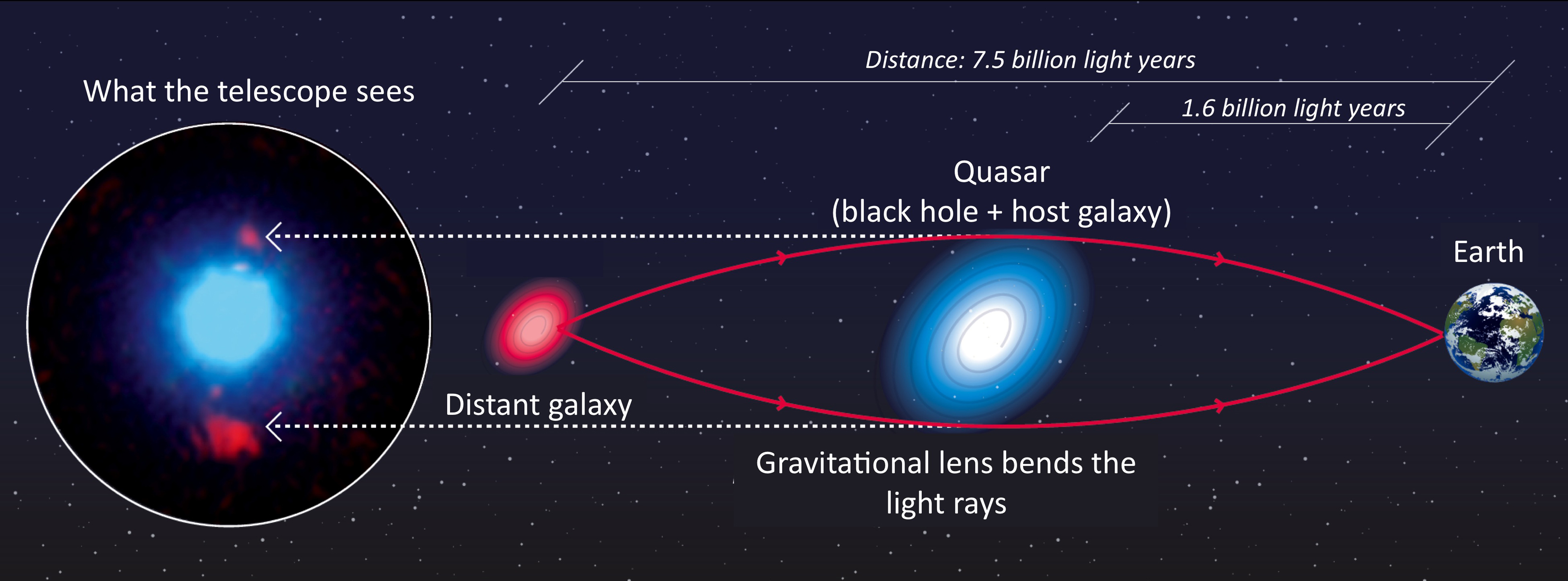

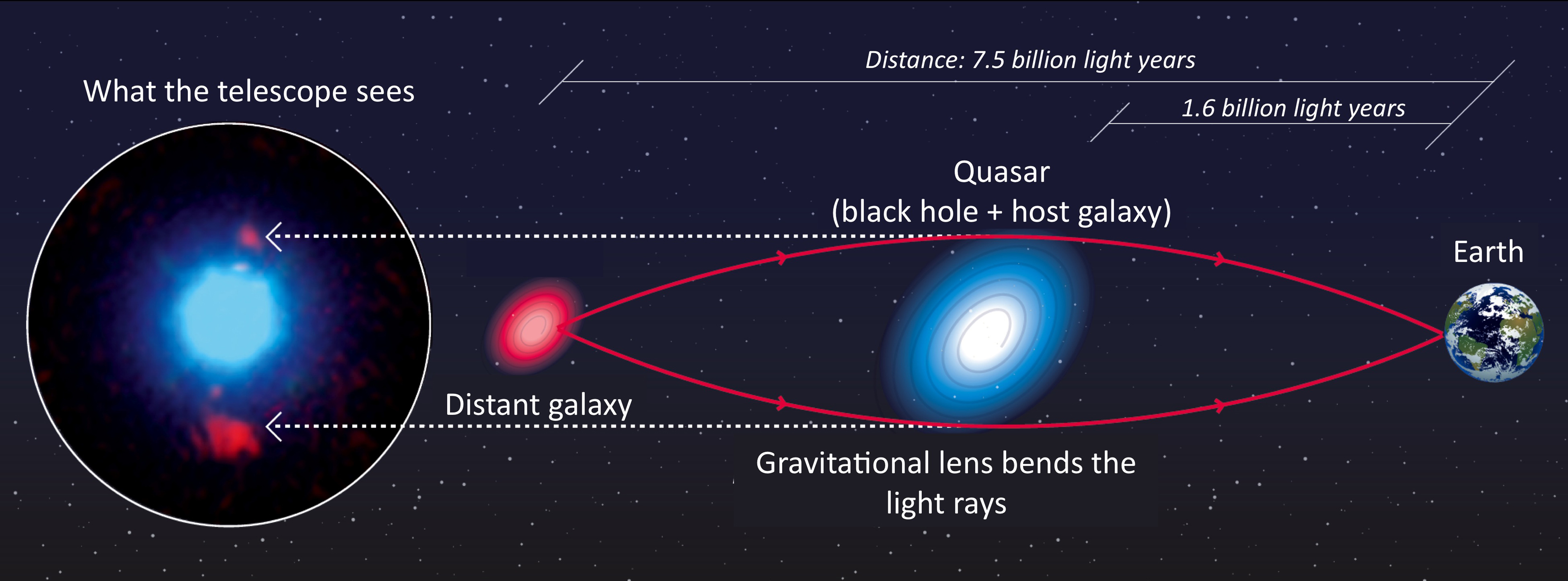

-"""

-Project: Cosmology

-==================

-

-This project uses the astrophysical phenomena of Strong Gravitational Lensing to illustrate multi-level model

-composition and fitting with **PyAutoFit**.

-

-A strong gravitational lens is a system where two (or more) galaxies align perfectly down our line of sight from Earth

-such that the foreground galaxy's mass deflects the light of a background source galaxy(s).

-

-When the alignment is just right and the lens is massive enough, the background source galaxy appears multiple

-times. The schematic below shows such a system, where light-rays from the source are deflected around the lens galaxy

-to the observer following multiple distinct paths.

-

-

-**Credit: F. Courbin, S. G. Djorgovski, G. Meylan, et al., Caltech / EPFL / WMKO**

-https://www.cosmology.caltech.edu/~george/qsolens/

-

-As an observer, we don't see the source's true appearance (e.g. the red round blob of light). We only observe its

-light after it has been deflected and lensed by the foreground galaxies (e.g. as the two distinct red multiple images

- in the image on the left). We also observe the emission of the foreground galaxy (in blue).

-

-You can read more about gravitational lensing as the following link:

-

-https://en.wikipedia.org/wiki/Gravitational_lens

-

-__PyAutoLens__

-

-Strong gravitational lensing is the original science case that sparked the development of **PyAutoFit**, which is

-a spin off of our astronomy software **PyAutoLens** `https://github.com/Jammy2211/PyAutoLens`.

-

-We'll use **PyAutoLens** to illustrate how the tools we developed with **PyAutoFit** allowed us to

-ensure **PyAutoLens**'s model fitting tools were extensible, easy to maintain and enabled intuitive model composition.

-

-__Multi-Level Models__

-

-Strong lensing is a great case study for using **PyAutoFit**, due to the multi-component nature of how one composes

-a strong lens model. A strong lens model consists of light and mass models of each galaxy in the lens system, where

-each galaxy is a model in itself. The galaxies are combined into one overall "lens model", which in later tutorials

-we will show may also have a Cosmological model.

-

-This example project uses **PyAutoFit** to compose and fit models of a strong lens, in particular highlighting

-**PyAutoFits** multi-level model composition.

-

-__Strong Lens Modeling__

-

-The models are fitted to Hubble Space Telescope imaging of a real strong lens system and will allow us to come up

-with a description of how light is deflected on its path through the Universe.

-

-This project consists of two example scripts / notebooks:

-

- 1) `example_1_intro`: An introduction to strong lensing, and the various parts of the project's source code that are

- used to represent a strong lens galaxy.

-

- 2) `example_2_multi_level_model`: Using **PyAutoFit** to model a strong lens, with a strong emphasis on the

- multi-level model API.

-

-__This Example__

-

-This introduction primarily focuses on what strong lensing is, how we define the individual model-components and fit

-a strong lens model to data. It does not make much use of **PyAutoFit**, but it does provide a clear understanding of

-the model so that **PyAutoFit**'s use in example 2 is clear.

-

-Note that import `import src as cosmo`. The package `src` contains all the code we need for this example Cosmology

-use case, and can be thought of as the source-code you would write to perform model-fitting via **PyAutoFit** for your

-problem of interest.

-

-The module `src/__init__.py` performs a series of imports that are used throughout this lecture to provide convenient

-access to different parts of the source code.

-"""

-

-# %matplotlib inline

-# from pyprojroot import here

-# workspace_path = str(here())

-# %cd $workspace_path

-# print(f"Working Directory has been set to `{workspace_path}`")

-

-import src as cosmo

-import matplotlib.pyplot as plt

-import numpy as np

-from scipy import signal

-from os import path

-

-"""

-__Plot__

-

-We will plot a lot of arrays of 2D data and grids of 2D coordinates in this example, so lets make a convenience

-functions.

-"""

-

-

-def plot_array(array, title=None, norm=None):

- plt.imshow(array, norm=norm)

- plt.colorbar()

- plt.title(title)

- plt.show()

- plt.close()

-

-

-def plot_grid(grid, title=None):

- plt.scatter(x=grid[:, :, 0], y=grid[:, :, 1], s=1)

- plt.title(title)

- plt.show()

- plt.close()

-

-

-"""

-__Data__

-

-First, lets load and plot Hubble Space Telescope imaging data of the strong gravitational lens called SDSSJ2303+1422,

-where this data includes:

-

- 1) The image of the strong lens, which is the data we'll fit.

- 2) The noise in every pixel of this image, which will be used when evaluating the log likelihood.

-

-__Masking__

-

-When fitting 2D imaging data, it is common to apply a mask which removes regions of the image that are not relevant to

-the model fitting.

-

-For example, when fitting the strong lens, we remove the edges of the image where the lens and source galaxy's light is

-not visible.

-

-In the strong lens image and noise map below, you can see this has already been performed, with the edge regions

-blank.

-"""

-dataset_path = path.join("projects", "cosmology", "dataset")

-

-data = np.load(file=path.join(dataset_path, "data.npy"))

-plot_array(array=data, title="Image of Strong Lens SDSSJ2303+1422")

-

-noise_map = np.load(file=path.join(dataset_path, "noise_map.npy"))

-plot_array(array=noise_map, title="Noise Map of Strong Lens SDSSJ2303+1422")

-

-"""

-In the image of the strong lens two distinct objects can be seen:

-

- 1) A central blob of light, corresponding to the foreground lens galaxy whose mass is responsible for deflecting light.

- 2) Two faint arcs of light in the bakcground, which is the lensed background source.

-

-__PSF__

-

-Another component of imaging data is the Point Spread Function (PSF), which describes how the light of the galaxies

-are blurred when they enter the Huble Space Telescope's.

-

-This is because diffraction occurs when the light enters HST's optics, causing the light to smear out. The PSF is

-a two dimensional array that describes this blurring via a 2D convolution kernel.

-

-When fitting the data below and in the `log_likelihood_function`, you'll see that the PSF is used when creating the

-model data. This is an example of how an `Analysis` class may be extended to include additional steps in the model

-fitting procedure.

-"""

-psf = np.load(file=path.join(dataset_path, "psf.npy"))

-plot_array(array=psf, title="Point Spread Function of Strong Lens SDSSJ2303+1422")

-

-

-"""

-__Grid__

-

-To perform strong lensing, we need a grid of (x,y) coordinates which we map throughout the Universe as if their path

-is deflected.

-

-For this, we create a simple 2D grid of coordinates below where the origin is (0.0, 0.0) and the size of

-a pixel is 0.05, which corresponds to the resolution of our image `data`.

-

-This grid only contains (y,x) coordinates within the cricular mask that was applied to the data, as we only need to

-perform ray-tracing within this region.

-"""

-grid = np.load(file=path.join(dataset_path, "grid.npy"))

-

-plot_grid(

- grid=grid,

- title="Cartesian grid of (x,y) coordinates aligned with strong lens dataset",

-)

-

-"""

-__Light Profiles__

-

-Our model of a strong lens must include a description of the light of each galaxy, which we call a "light profile".

-In the source-code of this example project, specifically the module `src/light_profiles.py` you will see there

-are two light profile classes named `LightDeVaucouleurs` and `LightExponential`.

-

-These Python classes are the model components we will use to represent each galaxy's light and they behave analogous

-to the `Gaussian` class seen in other tutorials. The input parameters of their `__init__` constructor (e.g. `centre`,

-`axis_ratio`, `angle`) are their model parameters that may be fitted for by a non-linear search.

-

-These classes also contain functions which create an image from the light profile if an input grid of (x,y) 2D

-coordinates are input, which we use below to create an image of a light profile.

-"""

-light_profile = cosmo.lp.LightExponential(

- centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, intensity=1.0, effective_radius=2.0

-)

-light_image = light_profile.image_from_grid(grid=grid)

-

-plot_array(array=light_image, title="Image of an Exponential light profile.")

-

-"""

-__Mass Profiles__

-

-Our model also includes the mass of the foreground lens galaxy, called a 'mass profile'. In the source-code of the

-example project, specifically the module `src/mass_profiles.py` you will see there is a mass profile class named

-`MassIsothermal`. Like the light profile, this will be a model-component **PyAutoFit** fits via a non-linear search.

-

-The class also contains functions which create the "deflections angles", which describe the angles by which light is

-deflected when it passes the mass of the foreground lens galaxy. These are subtracted from the (y,x) grid above to

-determine the original coordinates of the source galaxy before lensing.

-

-A higher mass galaxy, which bends light more, will have higher values of the deflection angles plotted below:

-"""

-mass_profile = cosmo.mp.MassIsothermal(

- centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.5

-)

-mass_deflections = mass_profile.deflections_from_grid(grid=grid)

-

-plot_array(

- array=mass_deflections[:, :, 0],

- title="X-component of the deflection angles of a Isothermal mass profile.",

-)

-plot_array(

- array=mass_deflections[:, :, 1],

- title="Y-component of the deflection angles of a Isothermal mass profile.",

-)

-

-"""

-__Ray Tracing__

-

-The deflection angles describe how our (x,y) grid of coordinates are deflected by the mass of the foreground galaxy.

-

-We can therefore ray-trace the grid aligned with SDSSJ2303+1422 using the mass profile above and plot a grid of

-coordinates in the reference frame of before their light is gravitationally lensed:

-"""

-traced_grid = grid - mass_deflections

-

-plot_grid(grid=traced_grid, title="Cartesian grid of (x,y) traced coordinates.")

-

-"""

-By inputting this traced grid of (x,y) coordinates into our light profile, we can create an image of the galaxy as if

-it were gravitationally lensed by the mass profile.

-"""

-traced_light_image = light_profile.image_from_grid(grid=traced_grid)

-

-plot_array(

- array=traced_light_image,

- title="Image of a gravitationally lensed Exponential light profile.",

-)

-

-"""

-__Galaxy__

-

-In the `src/galaxy.py` module we define the `Galaxy` class, which is a collection of light and mass profiles at an

-input redshift. For strong lens modeling, we have to use `Galaxy` objects, as the redshifts define how ray-tracing is

-performed.

-

-We create two instances of the `Galaxy` class, representing the lens and source galaxies in a strong lens system.

-"""

-light_profile = cosmo.lp.LightDeVaucouleurs(

- centre=(0.01, 0.01), axis_ratio=0.9, angle=45.0, intensity=0.1, effective_radius=1.0

-)

-mass_profile = cosmo.mp.MassIsothermal(

- centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.8

-)

-lens_galaxy = cosmo.Galaxy(

- redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

-)

-

-light_profile = cosmo.lp.LightExponential(

- centre=(0.1, 0.1), axis_ratio=0.5, angle=80.0, intensity=1.0, effective_radius=5.0

-)

-source_galaxy = cosmo.Galaxy(

- redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

-)

-

-"""

-A galaxy's image is the sum of its light profile images, and its deflection angles are the sum of its mass profile

-deflection angles.

-

-To illustrate this, lets plot the lens galaxy's light profile image:

-"""

-galaxy_image = lens_galaxy.image_from_grid(grid=grid)

-

-plot_array(array=galaxy_image, title="Image of the Lens Galaxy.")

-

-"""

-__Data Fitting__

-

-We can create an overall image of the strong lens by:

-

- 1) Creating an image of the lens galaxy.

- 2) Computing the deflection angles of the lens galaxy.

- 3) Ray-tracing light to the source galaxy reference frame and using its light profile to make its image.

-"""

-lens_image = lens_galaxy.image_from_grid(grid=grid)

-lens_deflections = lens_galaxy.deflections_from_grid(grid=grid)

-

-traced_grid = grid - lens_deflections

-

-source_image = source_galaxy.image_from_grid(grid=traced_grid)

-

-# The grid has zeros at its edges, which produce nans in the model image.

-# These lead to an ill-defined log likelihood, so we set them to zero.

-overall_image = np.nan_to_num(overall_image)

-

-plot_array(array=overall_image, title="Image of the overall Strong Lens System.")

-

-"""

-__Model Data__

-

-To produce the `model_data`, we now convolution the overall image with the Point Spread Function (PSF) of our

-observations. This blurs the image to simulate the telescope optics and pixelization used to observe the image.

-"""

-model_data = signal.convolve2d(overall_image, psf, mode="same")

-

-

-plot_array(array=model_data, title="Image of the overall Strong Lens System.")

-

-"""

-By subtracting this model image from the data, we can create a 2D residual map. This is equivalent to the residual maps

-we made in the 1D Gaussian examples, except for 2D imaging data.

-

-Clearly, the random lens model we used in this example does not provide a good fit to SDSSJ2303+1422.

-"""

-residual_map = data - model_data

-

-plot_array(array=residual_map, title="Residual Map of fit to SDSSJ2303+1422")

-

-"""

-Just like we did for the 1D `Gaussian` fitting examples, we can use the noise-map to compute the normalized residuals

-and chi-squared map of the lens model.

-"""

-# The circular masking introduces zeros at the edge of the noise-map,

-# which can lead to divide-by-zero errors.

-# We set these values to 1.0e8, to ensure they do not contribute to the log likelihood.

-noise_map_fit = noise_map

-noise_map_fit[noise_map == 0] = 1.0e8

-

-normalized_residual_map = residual_map / noise_map_fit

-

-chi_squared_map = (normalized_residual_map) ** 2.0

-

-plot_array(

- array=normalized_residual_map,

- title="Normalized Residual Map of fit to SDSSJ2303+1422",

-)

-plot_array(array=chi_squared_map, title="Chi Squared Map of fit to SDSSJ2303+1422")

-

-"""

-Finally, we can compute the `log_likelihood` of this lens model, which we will use in the next example to fit the

-lens model to data with a non-linear search.

-"""

-chi_squared = np.sum(chi_squared_map)

-noise_normalization = np.sum(np.log(2 * np.pi * noise_map**2.0))

-

-log_likelihood = -0.5 * (chi_squared + noise_normalization)

-

-print(log_likelihood)

-

-"""

-__Wrap Up__

-

-In this example, we introduced the astrophysical phenomena of strong gravitational lensing, and gave an overview of how

-one can create a model for a strong lens system and fit it to imaging data.

-

-We ended by defining the log likelihood of the model-fit, which will form the `log_likelihood_function` of the

-`Analysis` class we use in the next example, which fits this strong lens using **PyAutoFit**.

-

-There is one thing you should think about, how would we translate the above classes (e.g. `LightExponential`,

-`MassIsothermal` and `Galaxy`) using the **PyAutoFit** `Model` and `Collection` objects? The `Galaxy` class contained

-instances of the light and mass profile classes, meaning the standard use of the `Model` and `Collection` objects could

-not handle this.

-

-This is where multi-level models come in, as will be shown in the next example!

-"""

+"""

+Project: Cosmology

+==================

+

+This project uses the astrophysical phenomenon of Strong Gravitational Lensing to illustrate multi-level model

+composition and fitting with **PyAutoFit**.

+

+A strong gravitational lens is a system where two (or more) galaxies align perfectly down our line of sight from Earth

+such that the foreground galaxy's mass deflects the light of a background source galaxy(s).

+

+When the alignment is just right and the lens is massive enough, the background source galaxy appears multiple

+times. The schematic below shows such a system, where light-rays from the source are deflected around the lens galaxy

+to the observer following multiple distinct paths.

+

+

+**Credit: F. Courbin, S. G. Djorgovski, G. Meylan, et al., Caltech / EPFL / WMKO**

+https://www.cosmology.caltech.edu/~george/qsolens/

+

+As an observer, we don't see the source's true appearance (e.g. the red round blob of light). We only observe its

+light after it has been deflected and lensed by the foreground galaxies (e.g. as the two distinct red multiple images

+ in the image on the left). We also observe the emission of the foreground galaxy (in blue).

+

+You can read more about gravitational lensing at the following link:

+

+https://en.wikipedia.org/wiki/Gravitational_lens

+

+__PyAutoLens__

+

+Strong gravitational lensing is the original science case that sparked the development of **PyAutoFit**, which is

+a spin off of our astronomy software **PyAutoLens** `https://github.com/Jammy2211/PyAutoLens`.

+

+We'll use **PyAutoLens** to illustrate how the tools we developed with **PyAutoFit** allowed us to

+ensure **PyAutoLens**'s model fitting tools were extensible, easy to maintain and enabled intuitive model composition.

+

+__Multi-Level Models__

+

+Strong lensing is a great case study for using **PyAutoFit**, due to the multi-component nature of how one composes

+a strong lens model. A strong lens model consists of light and mass models of each galaxy in the lens system, where

+each galaxy is a model in itself. The galaxies are combined into one overall "lens model", which in later tutorials

+we will show may also have a Cosmological model.

+

+This example project uses **PyAutoFit** to compose and fit models of a strong lens, in particular highlighting

+**PyAutoFit**'s multi-level model composition.

+

+__Strong Lens Modeling__

+

+The models are fitted to Hubble Space Telescope imaging of a real strong lens system and will allow us to come up

+with a description of how light is deflected on its path through the Universe.

+

+This project consists of two example scripts / notebooks:

+

+ 1) `example_1_intro`: An introduction to strong lensing, and the various parts of the project's source code that are

+ used to represent a strong lens galaxy.

+

+ 2) `example_2_multi_level_model`: Using **PyAutoFit** to model a strong lens, with a strong emphasis on the

+ multi-level model API.

+

+__This Example__

+

+This introduction primarily focuses on what strong lensing is, how we define the individual model-components and fit

+a strong lens model to data. It does not make much use of **PyAutoFit**, but it does provide a clear understanding of

+the model so that **PyAutoFit**'s use in example 2 is clear.

+

+Note the import `import src as cosmo`. The package `src` contains all the code we need for this example Cosmology

+use case, and can be thought of as the source-code you would write to perform model-fitting via **PyAutoFit** for your

+problem of interest.

+

+The module `src/__init__.py` performs a series of imports that are used throughout this lecture to provide convenient

+access to different parts of the source code.

+"""

+

+# from autoconf import setup_notebook; setup_notebook()

+

+import src as cosmo

+import matplotlib.pyplot as plt

+import numpy as np

+from scipy import signal

+from os import path

+

+"""

+__Plot__

+

+We will plot a lot of arrays of 2D data and grids of 2D coordinates in this example, so let's make two convenience

+functions.

+"""

+

+

+def plot_array(array, title=None, norm=None):

+ plt.imshow(array, norm=norm)

+ plt.colorbar()

+ plt.title(title)

+ plt.show()

+ plt.close()

+

+

+def plot_grid(grid, title=None):

+ plt.scatter(x=grid[:, :, 0], y=grid[:, :, 1], s=1)

+ plt.title(title)

+ plt.show()

+ plt.close()

+

+

+"""

+__Data__

+

+First, let's load and plot Hubble Space Telescope imaging data of the strong gravitational lens called SDSSJ2303+1422,

+where this data includes:

+

+ 1) The image of the strong lens, which is the data we'll fit.

+ 2) The noise in every pixel of this image, which will be used when evaluating the log likelihood.

+

+__Masking__

+

+When fitting 2D imaging data, it is common to apply a mask which removes regions of the image that are not relevant to

+the model fitting.

+

+For example, when fitting the strong lens, we remove the edges of the image where the lens and source galaxy's light is

+not visible.

+

+In the strong lens image and noise map below, you can see this has already been performed, with the edge regions

+blank.

+"""

+dataset_path = path.join("projects", "cosmology", "dataset")

+

+data = np.load(file=path.join(dataset_path, "data.npy"))

+plot_array(array=data, title="Image of Strong Lens SDSSJ2303+1422")

+

+noise_map = np.load(file=path.join(dataset_path, "noise_map.npy"))

+plot_array(array=noise_map, title="Noise Map of Strong Lens SDSSJ2303+1422")

+

+"""

+In the image of the strong lens two distinct objects can be seen:

+

+ 1) A central blob of light, corresponding to the foreground lens galaxy whose mass is responsible for deflecting light.

+ 2) Two faint arcs of light in the background, which are the lensed images of the background source.

+

+__PSF__

+

+Another component of imaging data is the Point Spread Function (PSF), which describes how the light of the galaxies

+is blurred when it enters the Hubble Space Telescope's optics.

+

+This is because diffraction occurs when the light enters HST's optics, causing the light to smear out. The PSF is

+a two dimensional array that describes this blurring via a 2D convolution kernel.

+

+When fitting the data below and in the `log_likelihood_function`, you'll see that the PSF is used when creating the

+model data. This is an example of how an `Analysis` class may be extended to include additional steps in the model

+fitting procedure.

+"""

+psf = np.load(file=path.join(dataset_path, "psf.npy"))

+plot_array(array=psf, title="Point Spread Function of Strong Lens SDSSJ2303+1422")

+

+

+"""

+__Grid__

+

+To perform strong lensing, we need a grid of (x,y) coordinates which we map throughout the Universe as if their path

+is deflected.

+

+For this, we create a simple 2D grid of coordinates below where the origin is (0.0, 0.0) and the size of

+a pixel is 0.05, which corresponds to the resolution of our image `data`.

+

+This grid only contains (y,x) coordinates within the circular mask that was applied to the data, as we only need to

+perform ray-tracing within this region.

+"""

+grid = np.load(file=path.join(dataset_path, "grid.npy"))

+

+plot_grid(

+ grid=grid,

+ title="Cartesian grid of (x,y) coordinates aligned with strong lens dataset",

+)

+

+"""

+__Light Profiles__

+

+Our model of a strong lens must include a description of the light of each galaxy, which we call a "light profile".

+In the source-code of this example project, specifically the module `src/light_profiles.py` you will see there

+are two light profile classes named `LightDeVaucouleurs` and `LightExponential`.

+

+These Python classes are the model components we will use to represent each galaxy's light and they behave analogously

+to the `Gaussian` class seen in other tutorials. The input parameters of their `__init__` constructor (e.g. `centre`,

+`axis_ratio`, `angle`) are their model parameters that may be fitted for by a non-linear search.

+

+These classes also contain functions which create an image of the light profile from an input grid of (x,y) 2D

+coordinates, which we use below to create an image of a light profile.

+"""

+light_profile = cosmo.lp.LightExponential(

+ centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, intensity=1.0, effective_radius=2.0

+)

+light_image = light_profile.image_from_grid(grid=grid)

+

+plot_array(array=light_image, title="Image of an Exponential light profile.")

+

+"""

+__Mass Profiles__

+

+Our model also includes the mass of the foreground lens galaxy, called a 'mass profile'. In the source-code of the

+example project, specifically the module `src/mass_profiles.py` you will see there is a mass profile class named

+`MassIsothermal`. Like the light profile, this will be a model-component **PyAutoFit** fits via a non-linear search.

+

+The class also contains functions which create the "deflection angles", which describe the angles by which light is

+deflected when it passes the mass of the foreground lens galaxy. These are subtracted from the (y,x) grid above to

+determine the original coordinates of the source galaxy before lensing.

+

+A higher mass galaxy, which bends light more, will have higher values of the deflection angles plotted below:

+"""

+mass_profile = cosmo.mp.MassIsothermal(

+ centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.5

+)

+mass_deflections = mass_profile.deflections_from_grid(grid=grid)

+

+plot_array(

+ array=mass_deflections[:, :, 0],

+ title="X-component of the deflection angles of an Isothermal mass profile.",

+)

+plot_array(

+ array=mass_deflections[:, :, 1],

+ title="Y-component of the deflection angles of an Isothermal mass profile.",

+)

+

+"""

+__Ray Tracing__

+

+The deflection angles describe how our (x,y) grid of coordinates is deflected by the mass of the foreground galaxy.

+

+We can therefore ray-trace the grid aligned with SDSSJ2303+1422 using the mass profile above and plot a grid of

+coordinates in the reference frame before their light is gravitationally lensed:

+"""

+traced_grid = grid - mass_deflections

+

+plot_grid(grid=traced_grid, title="Cartesian grid of (x,y) traced coordinates.")

+

+"""

+By inputting this traced grid of (x,y) coordinates into our light profile, we can create an image of the galaxy as if

+it were gravitationally lensed by the mass profile.

+"""

+traced_light_image = light_profile.image_from_grid(grid=traced_grid)

+

+plot_array(

+ array=traced_light_image,

+ title="Image of a gravitationally lensed Exponential light profile.",

+)

+

+"""

+__Galaxy__

+

+In the `src/galaxy.py` module we define the `Galaxy` class, which is a collection of light and mass profiles at an

+input redshift. For strong lens modeling, we have to use `Galaxy` objects, as the redshifts define how ray-tracing is

+performed.

+

+We create two instances of the `Galaxy` class, representing the lens and source galaxies in a strong lens system.

+"""

+light_profile = cosmo.lp.LightDeVaucouleurs(

+ centre=(0.01, 0.01), axis_ratio=0.9, angle=45.0, intensity=0.1, effective_radius=1.0

+)

+mass_profile = cosmo.mp.MassIsothermal(

+ centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.8

+)

+lens_galaxy = cosmo.Galaxy(

+ redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

+)

+

+light_profile = cosmo.lp.LightExponential(

+ centre=(0.1, 0.1), axis_ratio=0.5, angle=80.0, intensity=1.0, effective_radius=5.0

+)

+source_galaxy = cosmo.Galaxy(

+ redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

+)

+

+"""

+A galaxy's image is the sum of its light profile images, and its deflection angles are the sum of its mass profile

+deflection angles.

+

+To illustrate this, let's plot the lens galaxy's light profile image:

+"""

+galaxy_image = lens_galaxy.image_from_grid(grid=grid)

+

+plot_array(array=galaxy_image, title="Image of the Lens Galaxy.")

+

+"""

+__Data Fitting__

+

+We can create an overall image of the strong lens by:

+

+ 1) Creating an image of the lens galaxy.

+ 2) Computing the deflection angles of the lens galaxy.

+ 3) Ray-tracing light to the source galaxy reference frame and using its light profile to make its image.

+"""

+lens_image = lens_galaxy.image_from_grid(grid=grid)

+lens_deflections = lens_galaxy.deflections_from_grid(grid=grid)

+

+traced_grid = grid - lens_deflections

+

+source_image = source_galaxy.image_from_grid(grid=traced_grid)

+

+# The grid has zeros at its edges, which produce nans in the model image.

+# These lead to an ill-defined log likelihood, so we set them to zero.

+overall_image = np.nan_to_num(lens_image + source_image)

+

+plot_array(array=overall_image, title="Image of the overall Strong Lens System.")

+

+"""

+__Model Data__

+

+To produce the `model_data`, we now convolve the overall image with the Point Spread Function (PSF) of our

+observations. This blurs the image to simulate the telescope optics and pixelization used to observe the image.

+"""

+model_data = signal.convolve2d(overall_image, psf, mode="same")

+

+

+plot_array(array=model_data, title="Model Data of the overall Strong Lens System (PSF convolved).")

+

+"""

+By subtracting this model image from the data, we can create a 2D residual map. This is equivalent to the residual maps

+we made in the 1D Gaussian examples, except for 2D imaging data.

+

+Clearly, the random lens model we used in this example does not provide a good fit to SDSSJ2303+1422.

+"""

+residual_map = data - model_data

+

+plot_array(array=residual_map, title="Residual Map of fit to SDSSJ2303+1422")

+

+"""

+Just like we did for the 1D `Gaussian` fitting examples, we can use the noise-map to compute the normalized residuals

+and chi-squared map of the lens model.

+"""

+# The circular masking introduces zeros at the edge of the noise-map,

+# which can lead to divide-by-zero errors.

+# We set these values to 1.0e8, to ensure they do not contribute to the log likelihood.

+noise_map_fit = noise_map

+noise_map_fit[noise_map == 0] = 1.0e8

+

+normalized_residual_map = residual_map / noise_map_fit

+

+chi_squared_map = (normalized_residual_map) ** 2.0

+

+plot_array(

+ array=normalized_residual_map,

+ title="Normalized Residual Map of fit to SDSSJ2303+1422",

+)

+plot_array(array=chi_squared_map, title="Chi Squared Map of fit to SDSSJ2303+1422")

+

+"""

+Finally, we can compute the `log_likelihood` of this lens model, which we will use in the next example to fit the

+lens model to data with a non-linear search.

+"""

+chi_squared = np.sum(chi_squared_map)

+noise_normalization = np.sum(np.log(2 * np.pi * noise_map_fit**2.0))

+

+log_likelihood = -0.5 * (chi_squared + noise_normalization)

+

+print(log_likelihood)

+

+"""

+__Wrap Up__

+

+In this example, we introduced the astrophysical phenomenon of strong gravitational lensing, and gave an overview of how

+one can create a model for a strong lens system and fit it to imaging data.

+

+We ended by defining the log likelihood of the model-fit, which will form the `log_likelihood_function` of the

+`Analysis` class we use in the next example, which fits this strong lens using **PyAutoFit**.

+

+There is one thing you should think about: how would we translate the above classes (e.g. `LightExponential`,

+`MassIsothermal` and `Galaxy`) using the **PyAutoFit** `Model` and `Collection` objects? The `Galaxy` class contained

+instances of the light and mass profile classes, meaning the standard use of the `Model` and `Collection` objects could

+not handle this.

+

+This is where multi-level models come in, as will be shown in the next example!

+"""
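The likelihood calculation that closes example 1 can be sketched without NumPy, as an illustration only. The function name `log_likelihood_from` and the `masked_noise_value` argument are assumptions made for this sketch and are not part of the workspace source. It works on flat lists of pixel values and builds a separate noise list, so the caller's noise map is left unmodified.

```python
import math


def log_likelihood_from(data, model_data, noise_map, masked_noise_value=1.0e8):
    """Gaussian log likelihood of flat lists of pixel values (hypothetical helper).

    Pixels with zero noise (e.g. masked edges) are given a very large noise value,
    so their chi-squared term is negligible and no division by zero occurs.
    """
    # Build a new list rather than editing `noise_map` in place.
    noise_map_fit = [masked_noise_value if n == 0 else n for n in noise_map]

    chi_squared = 0.0
    noise_normalization = 0.0
    for d, m, n in zip(data, model_data, noise_map_fit):
        normalized_residual = (d - m) / n
        chi_squared += normalized_residual**2.0
        noise_normalization += math.log(2.0 * math.pi * n**2.0)

    return -0.5 * (chi_squared + noise_normalization)


# Toy values, chosen only to exercise the function.
data = [1.0, 2.0, 3.0]
model_data = [1.0, 2.5, 2.0]
noise_map = [0.5, 0.5, 0.5]

log_likelihood = log_likelihood_from(data, model_data, noise_map)
print(log_likelihood)
```

Building `noise_map_fit` as a new list is a design choice of this sketch: the zero-noise replacement is then visible only inside the likelihood calculation.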

diff --git a/projects/cosmology/example_2_multi_level_model.py b/projects/cosmology/example_2_multi_level_model.py

index e19f75f5..63c2eea5 100644

--- a/projects/cosmology/example_2_multi_level_model.py

+++ b/projects/cosmology/example_2_multi_level_model.py

@@ -1,366 +1,362 @@

-"""

-Project: Cosmology

-==================

-

-This project uses the astrophysical phenomena of Strong Gravitational Lensing to illustrate basic and advanced model

-composition and fitting with **PyAutoFit**. The first tutorial described what a strong gravitational lens is and how

-we build and fit a model of one.

-

-In this example, we use **PyAutoFit**'s multi-level models to compose a strong lens model consisting of a lens and

-source galaxy, and fit it to the data on SDSSJ2303+1422.

-

-__Config Path__

-

-We first set up the path to this projects config files, which is located at `autofit_workspace/projects/cosmology/config`.

-

-This includes the default priors for the lens model, check it out!

-"""

-

-import os

-from os import path

-from autoconf import conf

-

-cwd = os.getcwd()

-config_path = path.join(cwd, "projects", "cosmology", "config")

-conf.instance.push(new_path=config_path)

-

-# %matplotlib inline

-# from pyprojroot import here

-# workspace_path = str(here())

-# %cd $workspace_path

-# print(f"Working Directory has been set to `{workspace_path}`")

-

-import autofit as af

-import src as cosmo

-import matplotlib.pyplot as plt

-import numpy as np

-

-"""

-__Plot__

-

-First, lets again define the plotting convenience functions we used in the previous example.

-"""

-

-

-def plot_array(array, title=None, norm=None):

- plt.imshow(array, norm=norm)

- plt.colorbar()

- plt.title(title)

- plt.show()

- plt.close()

-

-

-def plot_grid(grid, title=None):

- plt.scatter(x=grid[:, :, 0], y=grid[:, :, 1], s=1)

- plt.title(title)

- plt.show()

- plt.close()

-

-

-"""

-__Data__

-

-Now lets load and plot Hubble Space Telescope imaging data of the strong gravitational lens SDSSJ2303+1422.

-"""

-dataset_path = path.join("projects", "cosmology", "dataset")

-

-data = np.load(file=path.join(dataset_path, "data.npy"))

-plot_array(array=data, title="Image of Strong Lens SDSSJ2303+1422")

-

-noise_map = np.load(file=path.join(dataset_path, "noise_map.npy"))

-plot_array(array=noise_map, title="Noise Map of Strong Lens SDSSJ2303+1422")

-

-psf = np.load(file=path.join(dataset_path, "psf.npy"))

-plot_array(array=psf, title="Point Spread Function of Strong Lens SDSSJ2303+1422")

-

-grid = np.load(file=path.join(dataset_path, "grid.npy"))

-

-plot_grid(

- grid=grid,

- title="Cartesian grid of (x,y) coordinates aligned with strong lens dataset",

-)

-

-"""

-__Multi-level Model__

-

-In the previous example, we saw that we can use instances of the light profiles, mass profiles and galaxy objects to

-perform strong lens ray-tracing calculations:

-"""

-light_profile = cosmo.lp.LightDeVaucouleurs(

- centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, intensity=1.0, effective_radius=2.0

-)

-mass_profile = cosmo.mp.MassIsothermal(

- centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.5

-)

-galaxy = cosmo.Galaxy(

- redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

-)

-

-"""

-In this example, we want to perform a model-fit using a non-linear search, where the `Galaxy` is a `Model`, but it

-contains model subcomponents that are its individual light and mass profiles.

-

-Here is a pictoral representation of the model:

-

-

-

-__Model Composition__

-

-How do we compose a strong lens model where a `Galaxy` is a `Model`, but it contains the light and mass profiles

-as `Model` themselves?

-

-We use **PyAutoFit**'s multi-level model composition:

-"""

-lens = af.Model(

- cls=cosmo.Galaxy, # The overall model object uses this input.

- redshift=0.5,

- light_profile_list=[

- af.Model(cosmo.lp.LightDeVaucouleurs)

- ], # These will be subcomponents of the model.

- mass_profile_list=[

- af.Model(cosmo.mp.MassIsothermal)

- ], # These will be subcomponents of the model.

-)

-

-print(lens.info)

-

-"""

-Lets consider what is going on here:

-

- 1) We use a `Model` to create the overall model component. The `cls` input is the `Galaxy` class, therefore the

- overall model that is created is a `Galaxy`.

-

- 2) **PyAutoFit** next inspects whether the key word argument inputs to the `Model` match any of the `__init__`

- constructor arguments of the `Galaxy` class. This determine if these inputs are to be composed as model

- subcomponents of the overall `Galaxy` model.

-

- 3) **PyAutoFit** matches the `light_profile_list` and `mass_profile_list` inputs, noting they are passed as separate

- lists containing the `LightDeVaucouleurs` and `MassIsothermal` class. They are both created as subcomponents of

- the overall `Galaxy` model.

-

- 4) It also matches the `redshift` input, making it a fixed value of 0.5 for the model and not treating it as a

- free parameter.

-

-We can confirm this by printing the `total_free_parameters` of the lens, and noting it is 11 (6 parameters for

-the `LightDeVaucouleurs` and 5 for the `MassIsothermal`).

-"""

-print(lens.total_free_parameters)

-print(lens.light_profile_list[0].total_free_parameters)

-print(lens.mass_profile_list[0].total_free_parameters)

-

-"""

-The `lens` behaves exactly like the model-components we are used to previously. For example, we can unpack its

-individual parameters to customize the model, where below we:

-

- 1) Fix the light and mass profiles to the centre (0.0, 0.0).

- 2) Customize the prior on the light profile `axis_ratio`.

- 3) Fix the `axis_ratio` of the mass profile to 0.8.

-"""

-

-lens.light_profile_list[0].centre = (0.0, 0.0)

-lens.light_profile_list[0].axis_ratio = af.UniformPrior(

- lower_limit=0.7, upper_limit=0.9

-)

-lens.light_profile_list[0].angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

-lens.light_profile_list[0].intensity = af.LogUniformPrior(

- lower_limit=1e-4, upper_limit=1e4

-)

-lens.light_profile_list[0].effective_radius = af.UniformPrior(

- lower_limit=0.0, upper_limit=5.0

-)

-

-lens.mass_profile_list[0].centre = (0.0, 0.0)

-lens.mass_profile_list[0].axis_ratio = 0.8

-lens.mass_profile_list[0].angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

-lens.mass_profile_list[0].mass = af.UniformPrior(lower_limit=0.0, upper_limit=2.0)

-

-print(lens.info)

-

-"""

-__Alternative API__

-

-We can create the `Galaxy` model component with the exact same customization by creating each profile as a `Model` and

-passing these to the galaxy `Model`.

-"""

-light = af.Model(cosmo.lp.LightDeVaucouleurs)

-

-light.centre = af.UniformPrior(lower_limit=-0.05, upper_limit=0.05)

-light.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=0.9)

-light.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

-light.intensity = af.LogUniformPrior(lower_limit=1e-4, upper_limit=1e4)

-light.effective_radius = af.UniformPrior(lower_limit=0.0, upper_limit=5.0)

-

-

-mass = af.Model(cosmo.mp.MassIsothermal)

-

-mass.centre = (0.0, 0.0)

-mass.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=1.0)

-mass.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

-mass.mass = af.UniformPrior(lower_limit=0.0, upper_limit=4.0)

-

-lens = af.Model(

- cosmo.Galaxy, redshift=0.5, light_profile_list=[light], mass_profile_list=[mass]

-)

-

-print(lens.info)

-

-"""

-We can now create a model of our source galaxy using the same API.

-"""

-light = af.Model(cosmo.lp.LightExponential)

-

-light.centre.centre_0 = af.GaussianPrior(mean=0.0, sigma=0.3)

-light.centre.centre_1 = af.GaussianPrior(mean=0.0, sigma=0.3)

-light.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=1.0)

-light.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

-light.intensity = af.LogUniformPrior(lower_limit=1e-4, upper_limit=1e4)

-light.effective_radius = af.UniformPrior(lower_limit=0.0, upper_limit=1.0)

-

-source = af.Model(cosmo.Galaxy, redshift=1.0, light_profile_list=[light])

-

-print(source.info)

-

-"""

-We can now create our overall strong lens model, using a `Collection` in the same way we have seen previously.

-"""

-model = af.Collection(galaxies=af.Collection(lens=lens, source=source))

-

-print(model.info)

-

-"""

-The model contains both galaxies in the strong lens, alongside all of their light and mass profiles.

-

-For every iteration of the non-linear search **PyAutoFit** generates an instance of this model, where all of the

-`LightDeVaucouleurs`, `MassIsothermal` and `Galaxy` parameters of the are determined via their priors.

-

-An example instance is show below:

-"""

-instance = model.instance_from_prior_medians()

-

-print("Strong Lens Model Instance:")

-print("Lens Galaxy = ", instance.galaxies.lens)

-print("Lens Galaxy Light = ", instance.galaxies.lens.profile_list)

-print("Lens Galaxy Light Centre = ", instance.galaxies.lens.profile_list[0].centre)

-print("Lens Galaxy Mass Centre = ", instance.galaxies.lens.mass_profile_list[0].centre)

-print("Source Galaxy = ", instance.galaxies.source)

-

-"""

-We have successfully composed a multi-level model, which we can fit via a non-linear search.

-

-At this point, you should check out the `Analysis` class of this example project, in the

-module `projects/cosmology/src/analysis.py`. This class serves the same purpose that we have seen in the Gaussian 1D

-examples, with the `log_likelihood_function` implementing the calculation we showed in the first tutorial.

-

-The `path_prefix1 and `name` inputs below sepciify the path and folder where the results of the model-fit are stored

-in the output folder `autolens_workspace/output`. Results for this tutorial are writtent to hard-disk, due to the

-longer run-times of the model-fit.

-"""

-

-search = af.DynestyStatic(

- path_prefix=path.join("projects", "cosmology"),

- name="multi_level",

- nlive=50,

- iterations_per_full_update=2500,

-)

-

-analysis = cosmo.Analysis(data=data, noise_map=noise_map, psf=psf, grid=grid)

-

-"""

-If you comment out the code below, you will perform a lens model fit using the model and analysis class for

-this project. However, this model-fit is slow to run, and it isn't paramount that you run it yourself.

-

-The animation below shows a slide-show of the lens modeling procedure. Many lens models are fitted to the data over

-and over, gradually improving the quality of the fit to the data and looking more and more like the observed image.

-

-

-"""

-

-result = search.fit(model=model, analysis=analysis)

-

-"""

-__Extensibility__

-

-This example project highlights how multi-level models can make certain model-fitting problem fully extensible. For

-example:

-

- 1) A `Galaxy` class can be created using any combination of light and mass profiles, because it can wrap their

- `image_from_grid` and `deflections_from_grid` methods as the sum of the individual profiles.

-

- 2) The overall strong lens model can contain any number of `Galaxy`'s, as their methods are used

- to implement the lensing calculations in the `Analysis` class and `log_likelihood_function`.

-

-For problems of this nature, we can design and write code in a way that fully utilizes **PyAutoFit**'s multi-level

-modeling features to compose and fits models of arbitrary complexity and dimensionality.

-

-__Galaxy Clusters__

-

-To illustrate this further, consider the following dataset which is called a "strong lens galaxy cluster":

-

-

-

-For this strong lens, there are many tens of strong lens galaxies as well as multiple background source galaxies.

-

-However, despite it being a significantly more complex system than the single-galaxy strong lens we modeled above,

-our use of multi-level models ensures that we can model such datasets without any additional code development, for

-example:

-

-The lensing calculations in the source code `Analysis` object did not properly account for multiple galaxies

-(called multi-plane ray tracing). This would need to be updated to properly model a galaxy cluster, but this

-tutorial shows how a model can be composed for such a system.

-"""

-lens_0 = af.Model(

- cosmo.Galaxy,

- redshift=0.5,

- light_profile_list=[cosmo.lp.LightDeVaucouleurs],

- mass_profile_list=[cosmo.mp.MassIsothermal],

-)

-

-lens_1 = af.Model(

- cosmo.Galaxy,

- redshift=0.5,

- light_profile_list=[cosmo.lp.LightDeVaucouleurs],

- mass_profile_list=[cosmo.mp.MassIsothermal],

-)

-

-source_0 = af.Model(

- cosmo.Galaxy, redshift=1.0, light_profile_list=[af.Model(cosmo.lp.LightExponential)]

-)

-

-# ... repeat for desired model complexity ...

-

-model = af.Collection(

- galaxies=af.Collection(

- lens_0=lens_0,

- lens_1=lens_1,

- source_0=source_0,

- # ... repeat for desired model complexity ...

- )

-)

-

-print(model.info)

-

-"""

-Here is a pictoral representation of a strong lens cluster as a multi-level model:

-

-

-

-__Wrap Up__

-

-Strong gravitational lensing is a great example of a problem that can be approached using multi-level models.

-

-At the core of this is how there are many different models one could imagine defining which describe the light or mass

-of a galaxy. However, all of these models must derive the same fundamental property in order to fit the data, for

-example the image of a light profile or the deflection angles of the mass profile.

-

-The multi-level nature of strong lensing is not unique, and is commonly found in my Astronomy problems and the

-scientific literature in general. For example Astronomy problems:

-

- - Studies of galaxy structure, which represent the surface brightness distributions of galaxies as sums of Sersic

- profiles (or other parametric equations) to quantify whether they are bulge-like or disk-like.

-

- - Studies of galaxy dynamics, which represent the mass distribution of galaxies as sums of profiles like the Isothermal

- profile.

-

- - Studies of the activate galactic nuclei (AGN) of galaxies, where the different components of the AGN are represented

- as different model components.

-"""

+"""

+Project: Cosmology

+==================

+

+This project uses the astrophysical phenomenon of Strong Gravitational Lensing to illustrate basic and advanced model

+composition and fitting with **PyAutoFit**. The first tutorial described what a strong gravitational lens is and how

+we build and fit a model of one.

+

+In this example, we use **PyAutoFit**'s multi-level models to compose a strong lens model consisting of a lens and

+source galaxy, and fit it to the data on SDSSJ2303+1422.

+

+__Config Path__

+

+We first set up the path to this project's config files, which are located at `autofit_workspace/projects/cosmology/config`.

+

+This includes the default priors for the lens model, so check it out!

+"""

+

+import os

+from os import path

+from autoconf import conf

+

+cwd = os.getcwd()

+config_path = path.join(cwd, "projects", "cosmology", "config")

+conf.instance.push(new_path=config_path)

+

+# from autoconf import setup_notebook; setup_notebook()

+

+import autofit as af

+import src as cosmo

+import matplotlib.pyplot as plt

+import numpy as np

+

+"""

+__Plot__

+

+First, let's again define the plotting convenience functions we used in the previous example.

+"""

+

+

+def plot_array(array, title=None, norm=None):

+ plt.imshow(array, norm=norm)

+ plt.colorbar()

+ plt.title(title)

+ plt.show()

+ plt.close()

+

+

+def plot_grid(grid, title=None):

+ plt.scatter(x=grid[:, :, 0], y=grid[:, :, 1], s=1)

+ plt.title(title)

+ plt.show()

+ plt.close()

+

+

+"""

+__Data__

+

+Now let's load and plot Hubble Space Telescope imaging data of the strong gravitational lens SDSSJ2303+1422.

+"""

+dataset_path = path.join("projects", "cosmology", "dataset")

+

+data = np.load(file=path.join(dataset_path, "data.npy"))

+plot_array(array=data, title="Image of Strong Lens SDSSJ2303+1422")

+

+noise_map = np.load(file=path.join(dataset_path, "noise_map.npy"))

+plot_array(array=noise_map, title="Noise Map of Strong Lens SDSSJ2303+1422")

+

+psf = np.load(file=path.join(dataset_path, "psf.npy"))

+plot_array(array=psf, title="Point Spread Function of Strong Lens SDSSJ2303+1422")

+

+grid = np.load(file=path.join(dataset_path, "grid.npy"))

+

+plot_grid(

+ grid=grid,

+ title="Cartesian grid of (x,y) coordinates aligned with strong lens dataset",

+)

+

+"""

+__Multi-level Model__

+

+In the previous example, we saw that we can use instances of the light profiles, mass profiles and galaxy objects to

+perform strong lens ray-tracing calculations:

+"""

+light_profile = cosmo.lp.LightDeVaucouleurs(

+ centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, intensity=1.0, effective_radius=2.0

+)

+mass_profile = cosmo.mp.MassIsothermal(

+ centre=(0.01, 0.01), axis_ratio=0.7, angle=45.0, mass=0.5

+)

+galaxy = cosmo.Galaxy(

+ redshift=0.5, light_profile_list=[light_profile], mass_profile_list=[mass_profile]

+)

+

+"""

+In this example, we want to perform a model-fit using a non-linear search, where the `Galaxy` is a `Model`, but it

+contains model subcomponents that are its individual light and mass profiles.

+

+Here is a pictorial representation of the model:

+

+

+

+__Model Composition__

+

+How do we compose a strong lens model where a `Galaxy` is a `Model`, but it contains the light and mass profiles

+as `Model` objects themselves?

+

+We use **PyAutoFit**'s multi-level model composition:

+"""

+lens = af.Model(

+ cls=cosmo.Galaxy, # The overall model object uses this input.

+ redshift=0.5,

+ light_profile_list=[

+ af.Model(cosmo.lp.LightDeVaucouleurs)

+ ], # These will be subcomponents of the model.

+ mass_profile_list=[

+ af.Model(cosmo.mp.MassIsothermal)

+ ], # These will be subcomponents of the model.

+)

+

+print(lens.info)

+

+"""

+Let's consider what is going on here:

+

+ 1) We use a `Model` to create the overall model component. The `cls` input is the `Galaxy` class, therefore the

+ overall model that is created is a `Galaxy`.

+

+ 2) **PyAutoFit** next inspects whether the keyword argument inputs to the `Model` match any of the `__init__`

+ constructor arguments of the `Galaxy` class. This determines whether these inputs are to be composed as model

+ subcomponents of the overall `Galaxy` model.

+

+ 3) **PyAutoFit** matches the `light_profile_list` and `mass_profile_list` inputs, noting they are passed as separate

+ lists containing the `LightDeVaucouleurs` and `MassIsothermal` classes. They are both created as subcomponents of

+ the overall `Galaxy` model.

+

+ 4) It also matches the `redshift` input, making it a fixed value of 0.5 for the model and not treating it as a

+ free parameter.

+

+We can confirm this by printing the `total_free_parameters` of the lens, and noting it is 11 (6 parameters for

+the `LightDeVaucouleurs` and 5 for the `MassIsothermal`).

+"""

+print(lens.total_free_parameters)

+print(lens.light_profile_list[0].total_free_parameters)

+print(lens.mass_profile_list[0].total_free_parameters)

+

+"""

+The `lens` behaves exactly like the model components we have used previously. For example, we can unpack its

+individual parameters to customize the model, where below we:

+

+ 1) Fix the light and mass profiles to the centre (0.0, 0.0).

+ 2) Customize the prior on the light profile `axis_ratio`.

+ 3) Fix the `axis_ratio` of the mass profile to 0.8.

+"""

+

+lens.light_profile_list[0].centre = (0.0, 0.0)

+lens.light_profile_list[0].axis_ratio = af.UniformPrior(

+ lower_limit=0.7, upper_limit=0.9

+)

+lens.light_profile_list[0].angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

+lens.light_profile_list[0].intensity = af.LogUniformPrior(

+ lower_limit=1e-4, upper_limit=1e4

+)

+lens.light_profile_list[0].effective_radius = af.UniformPrior(

+ lower_limit=0.0, upper_limit=5.0

+)

+

+lens.mass_profile_list[0].centre = (0.0, 0.0)

+lens.mass_profile_list[0].axis_ratio = 0.8

+lens.mass_profile_list[0].angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

+lens.mass_profile_list[0].mass = af.UniformPrior(lower_limit=0.0, upper_limit=2.0)

+

+print(lens.info)

+

+"""

+__Alternative API__

+

+We can create a `Galaxy` model component with similar customization by creating each profile as a `Model` and

+passing these to the galaxy `Model`.

+"""

+light = af.Model(cosmo.lp.LightDeVaucouleurs)

+

+light.centre = af.UniformPrior(lower_limit=-0.05, upper_limit=0.05)

+light.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=0.9)

+light.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

+light.intensity = af.LogUniformPrior(lower_limit=1e-4, upper_limit=1e4)

+light.effective_radius = af.UniformPrior(lower_limit=0.0, upper_limit=5.0)

+

+

+mass = af.Model(cosmo.mp.MassIsothermal)

+

+mass.centre = (0.0, 0.0)

+mass.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=1.0)

+mass.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

+mass.mass = af.UniformPrior(lower_limit=0.0, upper_limit=4.0)

+

+lens = af.Model(

+ cosmo.Galaxy, redshift=0.5, light_profile_list=[light], mass_profile_list=[mass]

+)

+

+print(lens.info)

+

+"""

+We can now create a model of our source galaxy using the same API.

+"""

+light = af.Model(cosmo.lp.LightExponential)

+

+light.centre.centre_0 = af.GaussianPrior(mean=0.0, sigma=0.3)

+light.centre.centre_1 = af.GaussianPrior(mean=0.0, sigma=0.3)

+light.axis_ratio = af.UniformPrior(lower_limit=0.7, upper_limit=1.0)

+light.angle = af.UniformPrior(lower_limit=0.0, upper_limit=180.0)

+light.intensity = af.LogUniformPrior(lower_limit=1e-4, upper_limit=1e4)

+light.effective_radius = af.UniformPrior(lower_limit=0.0, upper_limit=1.0)

+

+source = af.Model(cosmo.Galaxy, redshift=1.0, light_profile_list=[light])

+

+print(source.info)

+

+"""

+We can now create our overall strong lens model, using a `Collection` in the same way we have seen previously.

+"""

+model = af.Collection(galaxies=af.Collection(lens=lens, source=source))

+

+print(model.info)

+

+"""

+The model contains both galaxies in the strong lens, alongside all of their light and mass profiles.

+

+For every iteration of the non-linear search **PyAutoFit** generates an instance of this model, where all of the

+`LightDeVaucouleurs`, `MassIsothermal` and `Galaxy` parameters are determined via their priors.

+

+An example instance is shown below:

+"""

+instance = model.instance_from_prior_medians()

+

+print("Strong Lens Model Instance:")

+print("Lens Galaxy = ", instance.galaxies.lens)

+print("Lens Galaxy Light = ", instance.galaxies.lens.light_profile_list)

+print("Lens Galaxy Light Centre = ", instance.galaxies.lens.light_profile_list[0].centre)

+print("Lens Galaxy Mass Centre = ", instance.galaxies.lens.mass_profile_list[0].centre)

+print("Source Galaxy = ", instance.galaxies.source)

+

+"""

+We have successfully composed a multi-level model, which we can fit via a non-linear search.

+

+At this point, you should check out the `Analysis` class of this example project, in the

+module `projects/cosmology/src/analysis.py`. This class serves the same purpose that we have seen in the Gaussian 1D

+examples, with the `log_likelihood_function` implementing the calculation we showed in the first tutorial.

+

+The `path_prefix` and `name` inputs below specify the path and folder where the results of the model-fit are stored

+in the output folder `autofit_workspace/output`. Results for this tutorial are written to hard-disk, due to the

+longer run-times of the model-fit.

+"""

+

+search = af.DynestyStatic(

+ path_prefix=path.join("projects", "cosmology"),

+ name="multi_level",

+ nlive=50,

+ iterations_per_full_update=2500,

+)

+

+analysis = cosmo.Analysis(data=data, noise_map=noise_map, psf=psf, grid=grid)

+

+"""

+The code below performs a lens model fit using the model and analysis class for this project. This model-fit is slow

+to run, so comment it out if you would rather not run it yourself; it isn't paramount that you do.

+

+The animation below shows a slide-show of the lens modeling procedure. Many lens models are fitted to the data over

+and over, gradually improving the quality of the fit to the data and looking more and more like the observed image.

+

+

+"""

+

+result = search.fit(model=model, analysis=analysis)

+

+"""

+__Extensibility__

+

+This example project highlights how multi-level models can make certain model-fitting problems fully extensible. For

+example:

+

+ 1) A `Galaxy` class can be created using any combination of light and mass profiles, because it can wrap their

+ `image_from_grid` and `deflections_from_grid` methods as the sum of the individual profiles.

+

+ 2) The overall strong lens model can contain any number of `Galaxy` objects, as their methods are used

+ to implement the lensing calculations in the `Analysis` class and `log_likelihood_function`.

+

+For problems of this nature, we can design and write code in a way that fully utilizes **PyAutoFit**'s multi-level

+modeling features to compose and fit models of arbitrary complexity and dimensionality.

+

+__Galaxy Clusters__

+

+To illustrate this further, consider the following dataset which is called a "strong lens galaxy cluster":

+

+

+

+For this strong lens, there are many tens of strong lens galaxies as well as multiple background source galaxies.

+

+However, despite it being a significantly more complex system than the single-galaxy strong lens we modeled above,

+our use of multi-level models ensures that we can compose a model for such datasets without any additional code

+development, as shown below.

+

+Note that the lensing calculations in the source code `Analysis` object do not account for multiple galaxies at

+different redshifts (called multi-plane ray tracing). This would need to be updated to properly model a galaxy cluster,

+but this tutorial shows how a model can be composed for such a system.

+"""

+lens_0 = af.Model(

+ cosmo.Galaxy,

+ redshift=0.5,

+ light_profile_list=[cosmo.lp.LightDeVaucouleurs],

+ mass_profile_list=[cosmo.mp.MassIsothermal],

+)

+

+lens_1 = af.Model(

+ cosmo.Galaxy,

+ redshift=0.5,

+ light_profile_list=[cosmo.lp.LightDeVaucouleurs],

+ mass_profile_list=[cosmo.mp.MassIsothermal],

+)

+

+source_0 = af.Model(

+ cosmo.Galaxy, redshift=1.0, light_profile_list=[af.Model(cosmo.lp.LightExponential)]

+)

+

+# ... repeat for desired model complexity ...

+

+model = af.Collection(

+ galaxies=af.Collection(

+ lens_0=lens_0,

+ lens_1=lens_1,

+ source_0=source_0,

+ # ... repeat for desired model complexity ...

+ )

+)

+

+print(model.info)

+

+"""

+Here is a pictorial representation of a strong lens cluster as a multi-level model:

+

+

+

+__Wrap Up__

+

+Strong gravitational lensing is a great example of a problem that can be approached using multi-level models.

+

+At the core of this is how there are many different models one could imagine defining which describe the light or mass

+of a galaxy. However, all of these models must derive the same fundamental property in order to fit the data, for

+example the image of a light profile or the deflection angles of the mass profile.

+

+The multi-level nature of strong lensing is not unique, and is commonly found in many Astronomy problems and the

+scientific literature in general. Examples of such Astronomy problems include:

+

+ - Studies of galaxy structure, which represent the surface brightness distributions of galaxies as sums of Sersic

+ profiles (or other parametric equations) to quantify whether they are bulge-like or disk-like.

+

+ - Studies of galaxy dynamics, which represent the mass distribution of galaxies as sums of profiles like the Isothermal

+ profile.

+

+ - Studies of the active galactic nuclei (AGN) of galaxies, where the different components of the AGN are represented

+ as different model components.

+"""
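The extensibility argument in example 2 rests on a galaxy's image being the sum of its profiles' images. A minimal standard-library sketch of that idea follows. `ToyLight` and `ToyGalaxy` are hypothetical stand-ins written for this illustration; they are not the workspace's `cosmo.lp` or `cosmo.Galaxy` classes, and the circular exponential profile is an assumed simplification.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ToyLight:
    """Hypothetical circular exponential light profile (illustration only)."""

    centre: Tuple[float, float] = (0.0, 0.0)
    intensity: float = 1.0
    effective_radius: float = 1.0

    def image_from_grid(self, grid):
        # One intensity value per coordinate pair in the grid.
        return [
            self.intensity
            * math.exp(
                -math.hypot(c0 - self.centre[0], c1 - self.centre[1])
                / self.effective_radius
            )
            for (c0, c1) in grid
        ]


@dataclass
class ToyGalaxy:
    """Hypothetical galaxy: a redshift plus any number of light profiles."""

    redshift: float
    light_profile_list: List[ToyLight] = field(default_factory=list)

    def image_from_grid(self, grid):
        # The galaxy image is the pixel-wise sum of its profile images.
        image = [0.0] * len(grid)
        for profile in self.light_profile_list:
            profile_image = profile.image_from_grid(grid)
            image = [total + value for total, value in zip(image, profile_image)]
        return image


grid = [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
bulge = ToyLight(intensity=2.0, effective_radius=0.5)
disk = ToyLight(intensity=0.5, effective_radius=2.0)

galaxy = ToyGalaxy(redshift=0.5, light_profile_list=[bulge, disk])
galaxy_image = galaxy.image_from_grid(grid)
print(galaxy_image)
```

Because `ToyGalaxy` only relies on each profile exposing `image_from_grid`, further profile types can be added to the list without changing the galaxy code. The tutorial's multi-level models depend on the same property.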

diff --git a/run_scripts.sh b/run_scripts.sh

index 6ac5076d..5deb5c50 100644

--- a/run_scripts.sh

+++ b/run_scripts.sh

@@ -13,6 +13,9 @@ NO_RUN_YAML="$SCRIPT_DIR/../PyAutoBuild/autobuild/config/no_run.yaml"

PROJECT_KEY="autofit"

export PYAUTOFIT_TEST_MODE=1

+export PYAUTO_WORKSPACE_SMALL_DATASETS=1

+export PYAUTO_DISABLE_CRITICAL_CAUSTICS=1

+export PYAUTO_FAST_PLOTS=1

# ---------------------------------------------------------------------------

# Build ordered script list: within each directory, start_here.py runs first,

diff --git a/scripts/cookbooks/analysis.py b/scripts/cookbooks/analysis.py

index 184da5d2..91e1ff6a 100644

--- a/scripts/cookbooks/analysis.py

+++ b/scripts/cookbooks/analysis.py

@@ -1,721 +1,717 @@

-"""

-Cookbook: Analysis

-==================

-

-The `Analysis` class is the interface between the data and model, whereby a `log_likelihood_function` is defined

-and called by the non-linear search to fit the model.

-

-This cookbook provides an overview of how to use and extend `Analysis` objects in **PyAutoFit**.

-

-__Contents__

-

- - Example: A simple example of an analysis class which can be adapted for your use-case.

- - Customization: Customizing an analysis class with different data inputs and editing the `log_likelihood_function`.

- - Visualization: Using a `visualize` method so that model-specific visuals are output to hard-disk.

- - Custom Result: Return a custom Result object with methods specific to your model fitting problem.

- - Latent Variables: Adding a `compute_latent_variables` method to the analysis to output latent variables to hard-disk.

- - Custom Output: Add methods which output model-specific results to hard-disk in the `files` folder (e.g. as .json

- files) to aid in the interpretation of results.

-"""

-

-# %matplotlib inline

-# from pyprojroot import here

-# workspace_path = str(here())

-# %cd $workspace_path

-# print(f"Working Directory has been set to `{workspace_path}`")

-

-import json

-import numpy as np

-from os import path

-from typing import Optional, Tuple

-

-import autofit as af

-

-"""

-__Example__

-

-An example simple `Analysis` class, to remind ourselves of the basic structure and inputs.

-

-This can be adapted for your use case.

-"""

-

-

-class Analysis(af.Analysis):

- def __init__(self, data: np.ndarray, noise_map: np.ndarray):

- """

- The `Analysis` class acts as an interface between the data and model in **PyAutoFit**.

-

- Its `log_likelihood_function` defines how the model is fitted to the data and it is called many times by

- the non-linear search fitting algorithm.

-

- In this example the `Analysis` `__init__` constructor only contains the `data` and `noise_map`, but it can be

- easily extended to include other quantities.

-

- Parameters

- ----------

- data

- A 1D numpy array containing the data (e.g. a noisy 1D signal) fitted in the workspace examples.

- noise_map

- A 1D numpy array containing the noise values of the data, used for computing the goodness of fit

- metric, the log likelihood.

- """

- super().__init__()

-

- self.data = data

- self.noise_map = noise_map

-

- def log_likelihood_function(self, instance) -> float:

- """

- Returns the log likelihood of a fit of a 1D Gaussian to the dataset.

-

- The data is fitted using an `instance` of the `Gaussian` class where its `model_data_from`

- is called in order to create a model data representation of the Gaussian that is fitted to the data.

- """

-

- xvalues = np.arange(self.data.shape[0])

-

- model_data = instance.model_data_from(xvalues=xvalues)

-

- residual_map = self.data - model_data

- chi_squared_map = (residual_map / self.noise_map) ** 2.0

- chi_squared = sum(chi_squared_map)

- noise_normalization = np.sum(np.log(2 * np.pi * self.noise_map**2.0))

- log_likelihood = -0.5 * (chi_squared + noise_normalization)

-

- return log_likelihood

-

-

-"""

-An instance of the analysis class is created as follows.

-"""

-dataset_path = path.join("dataset", "example_1d", "gaussian_x1")

-

-"""

-__Dataset Auto-Simulation__

-

-If the dataset does not already exist on your system, it will be created by running the corresponding

-simulator script. This ensures that all example scripts can be run without manually simulating data first.

-"""

-if not path.exists(dataset_path):

- import subprocess

- import sys

-

- subprocess.run(

- [sys.executable, "scripts/simulators/simulators.py"],

- check=True,

- )

-

-data = af.util.numpy_array_from_json(file_path=path.join(dataset_path, "data.json"))

-noise_map = af.util.numpy_array_from_json(

- file_path=path.join(dataset_path, "noise_map.json")

-)

-

-analysis = Analysis(data=data, noise_map=noise_map)

-

-"""

-__Customization__

-

-The `Analysis` class can be fully customized to be suitable for your model-fit.

-

-For example, additional inputs can be included in the `__init__` constructor and used in the `log_likelihood_function`

-if they are required for your `log_likelihood_function` to work.

-

-The example below includes three additional inputs:

-

- - Instead of inputting a `noise_map`, a `noise_covariance_matrix` is input, which means that correlated noise is

- accounted for in the `log_likelihood_function`.

-

- - A `mask` is input which masks the data such that certain data points are omitted from the log likelihood.

-

- - A `kernel` is input which can account for certain blurring operations during data acquisition.

-"""

-

-

-class Analysis(af.Analysis):

- def __init__(

- self,

- data: np.ndarray,

- noise_covariance_matrix: np.ndarray,

- mask: np.ndarray,

- kernel: np.ndarray,

- ):

- """

- The `Analysis` class which has had its inputs edited for a different model-fit.

-

- Parameters

- ----------

- data

- A 1D numpy array containing the data (e.g. a noisy 1D signal) fitted in the workspace examples.

- noise_covariance_matrix

- A 2D numpy array containing the noise values and their covariances for the data, used for computing the

- goodness of fit whilst accounting for correlated noise.

- mask

- A 1D numpy array containing a mask, where `True` values mean a data point is masked and is omitted from

- the log likelihood.

- kernel

- A 1D numpy array containing the blurring kernel of the data, used for creating the model data.

- """

- super().__init__()

-

- self.data = data

- self.noise_covariance_matrix = noise_covariance_matrix

- self.mask = mask

- self.kernel = kernel

-

- def log_likelihood_function(self, instance) -> float:

- """

- The `log_likelihood_function` now has access to the `noise_covariance_matrix`, `mask` and `kernel`

- input above.

- """

- print(self.noise_covariance_matrix)

- print(self.mask)

- print(self.kernel)

-

- """

- We do not provide a specific example of how to use these inputs in the `log_likelihood_function` as they are

- specific to your model fitting problem.

-

- The key point is that any inputs required to compute the log likelihood can be passed into the `__init__`

- constructor of the `Analysis` class and used in the `log_likelihood_function`.

- """

-